Polymath Engineer Weekly #58

The world is changing. Are you?

Hello again.

Links of the week

PostgreSQL: No More VACUUM, No More Bloat

The PostgreSQL community, in their continued efforts to improve the system, introduced autovacuum - an automatic vacuuming process designed to alleviate the need for manual vacuuming. This was a significant step forward, but it was not a perfect solution. The autovacuum process, while automatic, still consumed substantial system resources. This is one of the reasons why Uber decided to migrate from PostgreSQL to MySQL and one of the 10 things that Rick Branson hates about PostgreSQL.

Further enhancements came with the implementation of Heap-Only Tuples (HOT) updates and microvacuum, both significant improvements that reduced the need for full table vacuums. However, despite these advancements, the VACUUM process still remained a resource-intensive operation. Furthermore, PostgreSQL tables remained prone to bloat, an issue that continues to plague many users today. This is the part of PostgreSQL that the team at OtterTune hates the most.

On Becoming a VP of Engineering, Part 2: Doing the Job

Strategy is also not one-and-done. There’s ongoing and often painful work to create adequate focus to actually implement the strategy. This is particularly hard on engineering teams, where we always have to balance multiple priorities: security, reliability, performance, UX, shipping new features, iterating on existing features, internal developer experience, maintainability/tech debt, quality, scaling, etc. A successful startup engineering team has to say no to tantalizing opportunities constantly. As a VP, a key part of the job is helping your teams gracefully say no, even when it may feel painful to the team, customers, or others in the company.

LLAMA 2: an incredible open-source LLM

At no point does LLAMA 2 feel like a complete project or one that is stopping anytime soon. In fact, the model likely has been trained for months and I expect the next one is in the works. The supporting text of the paper, e.g. the introduction and conclusion, carries a lot of the motivational load of the paper. Meta is leaning heavily into the lenses of trust, accountability, and democratization of AI via Open-Source. Democratization is the one that I am most surprised by, given the power inequities in AI development and usage.

How React 18 Improves Application Performance

React 18 has introduced concurrent features that fundamentally change the way React applications can be rendered. We'll explore how these latest features impact and improve your application's performance.

Coroutines for Go

First I tried having the compiler mark send-receive pairs and leave hints for the runtime to fuse them into a single operation. That would let the channel runtime bypass the scheduler and jump directly to the other coroutine. This implementation requires about 118ns per switch, or 236ns per pulled value (38% faster). That's better, but it's still not as fast as I would like. The full generality of channels is adding too much overhead.

Next I added a direct coroutine switch to the runtime, avoiding channels entirely. That cuts the coroutine switch to three atomic compare-and-swaps (one in the coroutine data structure, one for the scheduler status of the blocking coroutine, and one for the scheduler status of the resuming coroutine), which I believe is optimal given the safety invariants that must be maintained. That implementation takes 20ns per switch, or 40ns per pulled value. This is about 10X faster than the original channel implementation. Perhaps more importantly, 40ns per pulled value seems small enough in absolute terms not to be a bottleneck for code that needs coro.Pull.

Leading vs. Managing in the Engineering World

This means that leaders can be found among senior engineers, junior engineers, project managers, architects, or even interns. What sets these individuals apart is their ability to inspire others, to communicate effectively, and to drive towards a shared vision. They often exhibit a strong understanding of the bigger picture and how their team’s work fits into it.

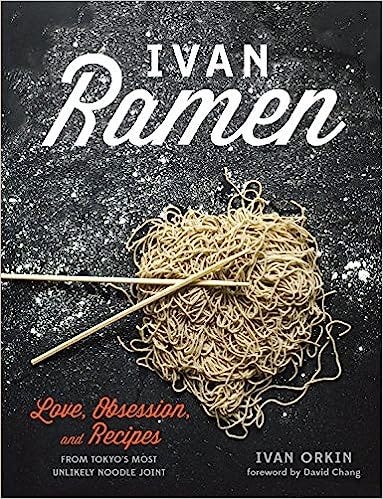

Book of the Week

Ivan Ramen: Love, Obsession, and Recipes from Tokyo's Most Unlikely Noodle Joint

Do you have any more links our community should read? Feel free to post them in the comments.

Have a nice week. 😉

Have you read last week's post? Check the archive.